If you have ever tried to download weather data for your specific locations, timeframe and format needs, you will know that it is a challenging exercise. Most of the time the solution is to use the API to build a script or otherwise write code to create the dataset. This adds cost, time and maintenance to get it right. As part of its Query Builder, Visual Crossing gives users the ability to build a packaged weather dataset that can be scheduled to update as needed and can be retrieved either through a Web URL link or by simply downloading it. In this document, we will show you the details of how you can build this dataset for yourself. We will link to other documents that show how you can load these datasets into Excel, applications, scripts, ETL tools and more.

Sign-in

The first step is to sign up for an account and log in, or simply log in if you already have an account. Please refer to this document and follow along in the account creation process. Remember that free accounts are available and offer up to 1000 free records per day.

Query Builder

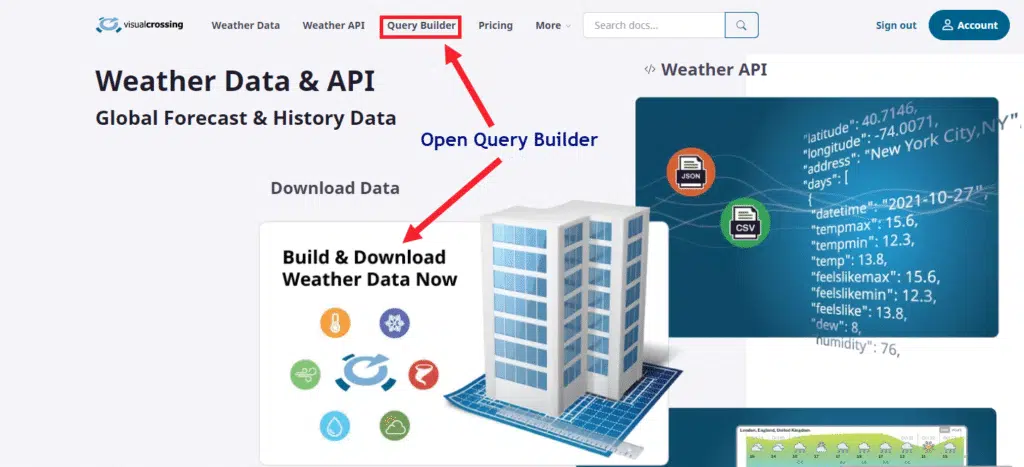

For this exercise we will use the Query Builder to create the packaged dataset that we will download. To begin, log in to your account on the Visual Crossing homepage and visit the Query Builder page:

By clicking on either of the two links on the home page you will open the Query Builder.

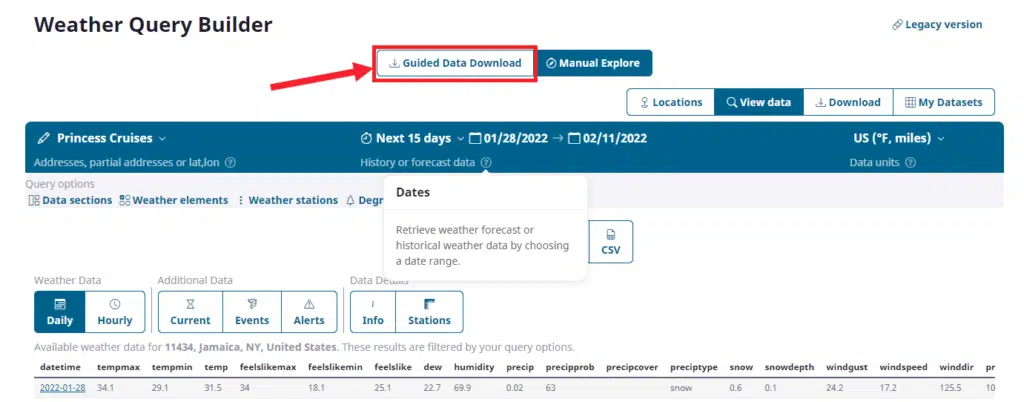

When you first visit the Query Builder page you will be in ‘Manual Explore’ mode. This mode is great for experienced users who know how to create a query but for our needs, we will click on the ‘Guided Data Download’ mode as seen above.

This mode will take you step-by-step through the creation and optional scheduling of your dataset.

Choosing the Locations

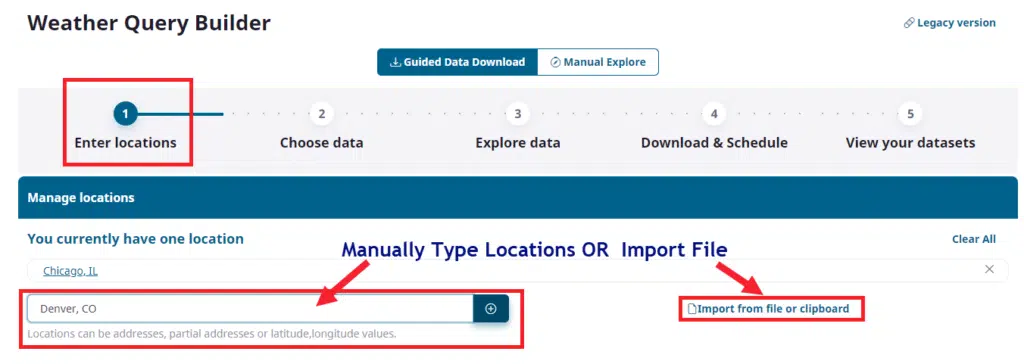

Step 1 asks you to define which locations you will query.

Here you can simply type addresses, lat/long values, cities or postal codes and click the ‘+’ icon to manually add locations to the list. The other option is to load from a CSV file (saved from Excel) or paste from the clipboard. We will choose to import our dataset from a file. Once we click on ‘Import from file or clipboard’ the following options will appear:

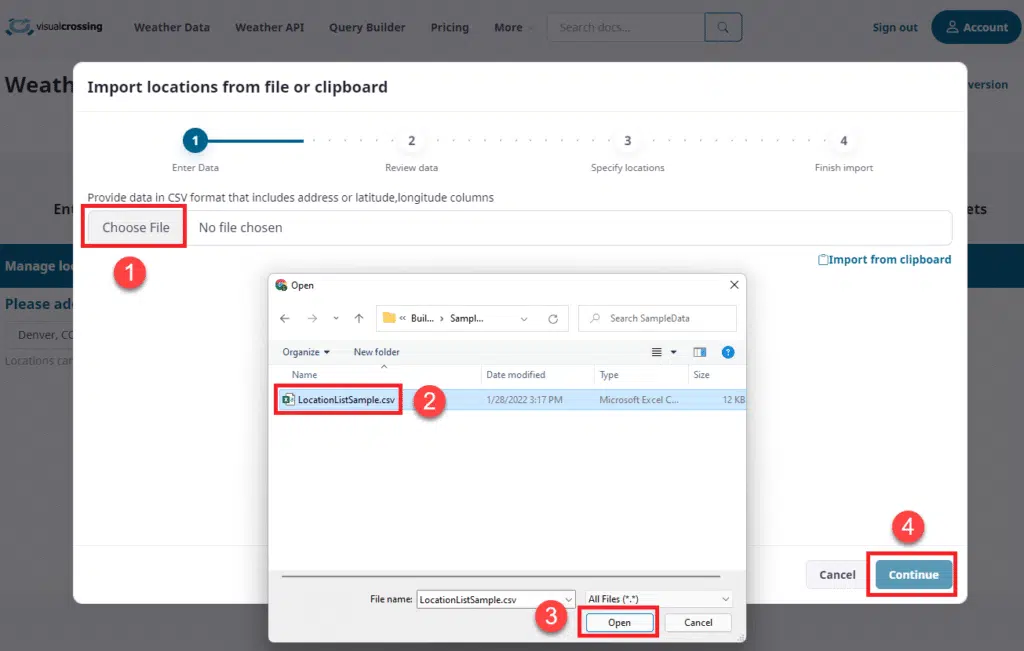

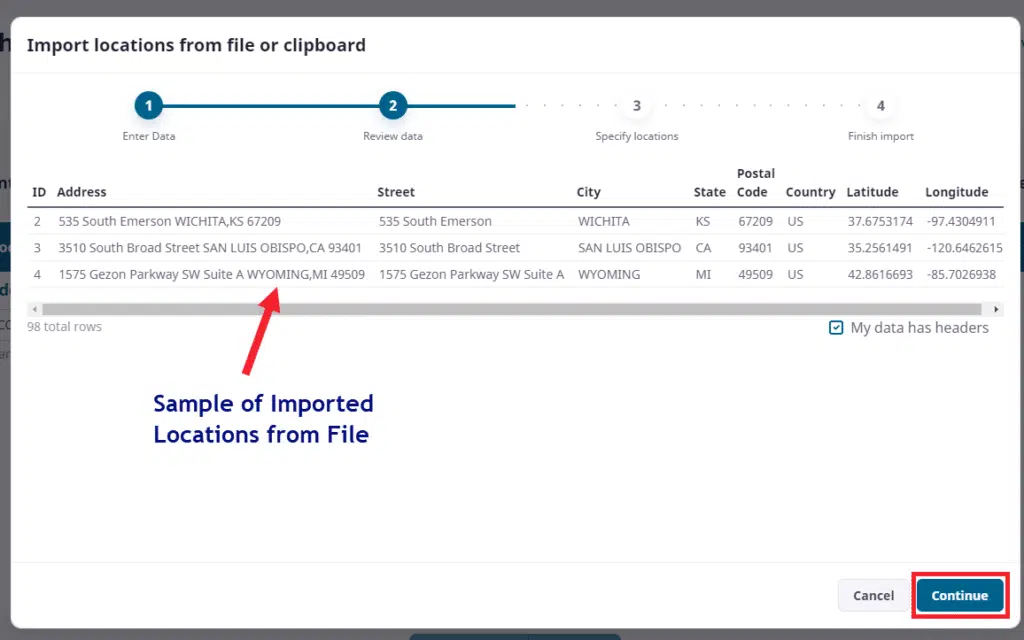

By clicking on ‘Choose File’ we open our system file chooser and select a dataset that has one or more columns with the address data for Query Builder to import locations from. Here we select our file and click ‘Open’ and ‘Continue’. As we can see below, Query Builder has read the dataset and shows us the data it has found.

This step is an important verification step and you should look at the imported columns to determine which columns will make up your weather location address data and which column will represent the name of the location. In our example above we can choose to import using lat/longs in two different columns OR we can simply use “Address” column and let Query Builder translate the address to lat/long internally.

NOTE: Lat/long is almost always the best choice if you have the data. Address strings have to be put through a geocoder, and while modern geocoders are quite good, they can make mistakes in some instances, so you should validate that each translated address is in the right location. This is especially true in remote countries.
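If you do import lat/long columns, a quick sanity check of the file before uploading can catch blank or out-of-range values. The following is a minimal sketch, not part of Query Builder itself; the column names `Latitude` and `Longitude` and the sample rows are assumptions made up for illustration, so adjust them to match your own file.

```python
import csv
import io


def find_bad_coordinates(csv_text, lat_col="Latitude", lon_col="Longitude"):
    """Return (line_number, reason) pairs for rows with unusable coordinates.

    The column names are hypothetical; change them to match your file.
    """
    problems = []
    reader = csv.DictReader(io.StringIO(csv_text))
    # Data rows start at line 2 because line 1 holds the header.
    for line_number, row in enumerate(reader, start=2):
        try:
            lat = float(row[lat_col])
            lon = float(row[lon_col])
        except (KeyError, TypeError, ValueError):
            problems.append((line_number, "missing or non-numeric value"))
            continue
        if not -90 <= lat <= 90:
            problems.append((line_number, "latitude out of range"))
        elif not -180 <= lon <= 180:
            problems.append((line_number, "longitude out of range"))
    return problems


# Made-up sample data: one good row, one bad latitude, one blank row.
sample = """City,Latitude,Longitude
Reston,38.9586,-77.3570
Nowhere,138.9,-77.0
Blank,,
"""
print(find_bad_coordinates(sample))
```

A check like this only confirms that the values are plausible coordinates. It cannot tell you whether a point is the location you intended, so a visual spot check is still worthwhile.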

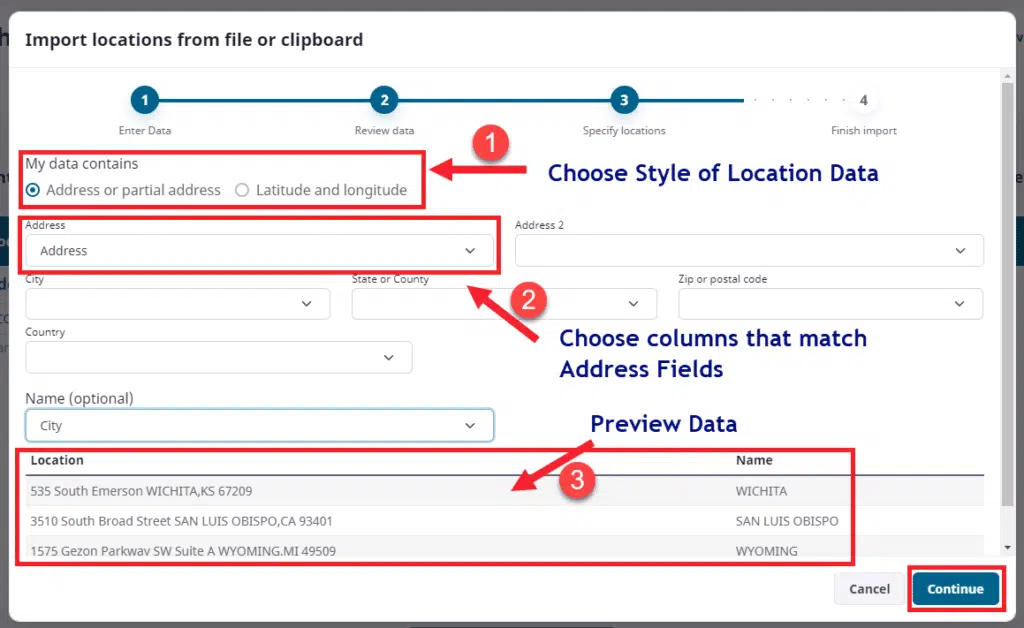

The next step is to assign our columns officially for both location and name.

In the above example we will choose to import address strings in step 1. In step 2 we assign our “Address” column to the Address field. Note again that we could have constructed the address from multiple columns if our dataset did not have a single field representing the address. In this scenario we only need to fill out one field and leave the others blank.

Also as a part of step 2 we chose to use the “City” column as a recognizable name for our locations. You can choose any name column you wish, including customer ID, store name, address, etc. This field is used for two primary purposes. One is human readability of the final dataset. The other is joining this dataset back to another matching dataset after you download and import it into your systems. It is best to make sure the ‘Name’ field is unique whenever possible.
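To illustrate why a unique name matters, here is a small sketch that checks a location file for repeated names and then joins downloaded weather rows back to the original locations. It assumes the location file uses a `City` column and that the weather CSV carries the chosen name in a column called `name`; both column names and all sample values are assumptions for illustration, so check the headers in your own files.

```python
import csv
import io
from collections import Counter


def duplicate_names(csv_text, name_col="City"):
    """Return the sorted list of names that appear more than once."""
    reader = csv.DictReader(io.StringIO(csv_text))
    counts = Counter(row[name_col].strip() for row in reader)
    return sorted(name for name, n in counts.items() if n > 1)


def join_on_name(locations_text, weather_text,
                 loc_name_col="City", weather_name_col="name"):
    """Attach each weather row to the location row with the same name."""
    locations = {
        row[loc_name_col]: row
        for row in csv.DictReader(io.StringIO(locations_text))
    }
    joined = []
    for weather_row in csv.DictReader(io.StringIO(weather_text)):
        location = locations.get(weather_row[weather_name_col])
        if location is not None:
            # Location fields first, then the weather fields.
            joined.append({**location, **weather_row})
    return joined


# Made-up sample data for illustration only.
locations_with_dupes = "City,StoreID\nReston,101\nBoston,102\nReston,103\n"
locations = "City,StoreID\nReston,101\nBoston,102\n"
weather = ("name,datetime,tempmax\n"
           "Reston,2024-01-01,45.0\n"
           "Boston,2024-01-01,38.2\n")

print(duplicate_names(locations_with_dupes))
print(join_on_name(locations, weather)[0])
```

With duplicate names, the dictionary lookup in `join_on_name` would silently keep only the last matching location, which is the kind of ambiguity a unique ‘Name’ field avoids.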

Finally in step 3 above you can see what final values you are giving to the system as your locations and name with examples from your file import. This is key to making sure that you don’t have repeated string data and in general that the addresses look complete and accurate.

Now we can click ‘Continue’ and move onto our next step.

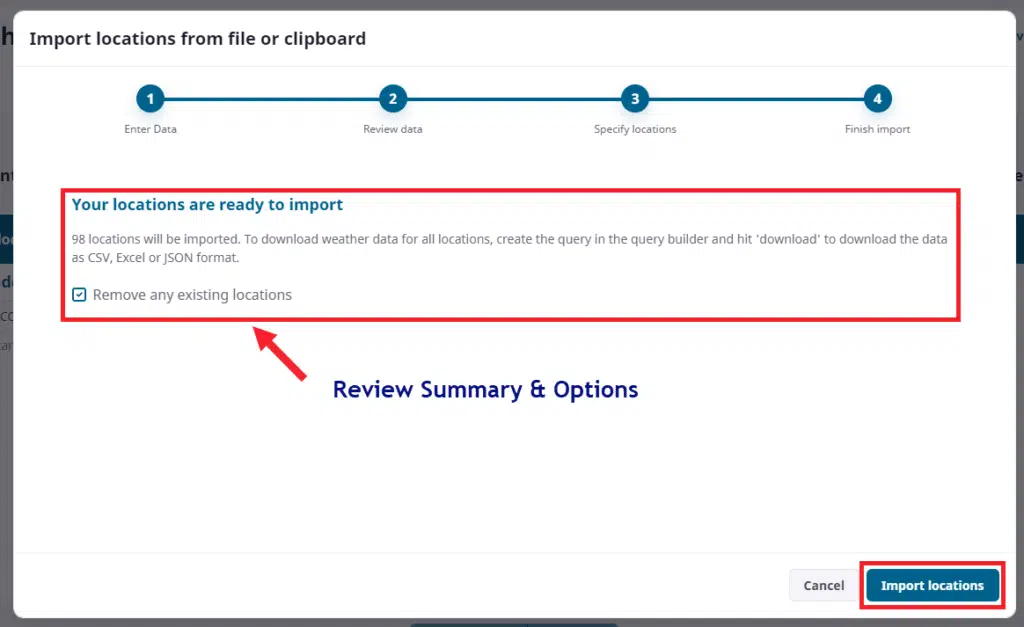

The final step on location import is to verify the number of locations and optionally ask the system to remove any prior locations you have from previous datasets. When you verify this information you can click ‘Import Locations’ to let the system complete its job.

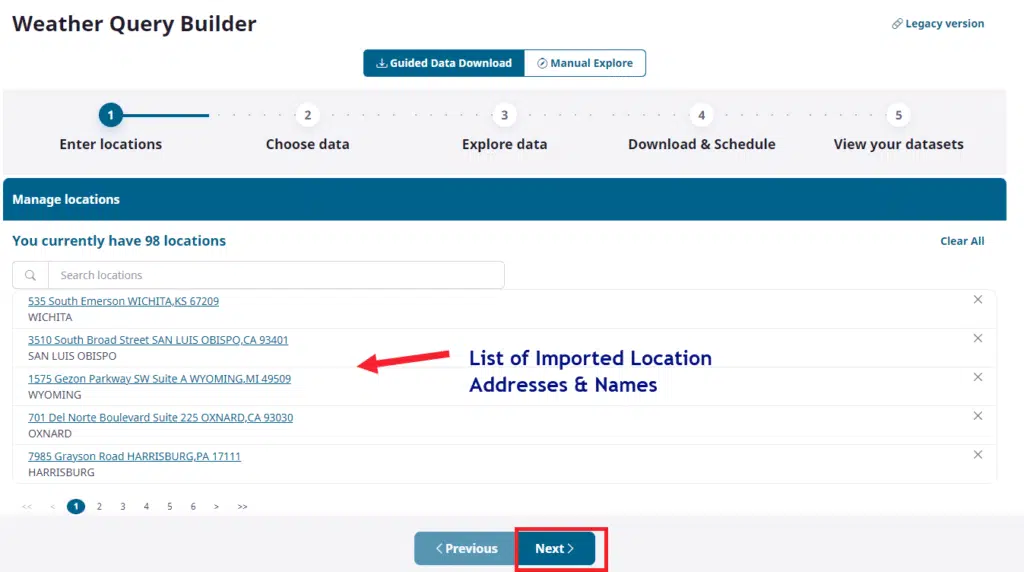

Once complete you should now see the list of all imported locations and your first step in building a dataset is complete.

Here you can scroll through all imported locations if desired for validation. Once complete, simply click ‘Next’ to move onto our data selections.

Selecting the date range and other options

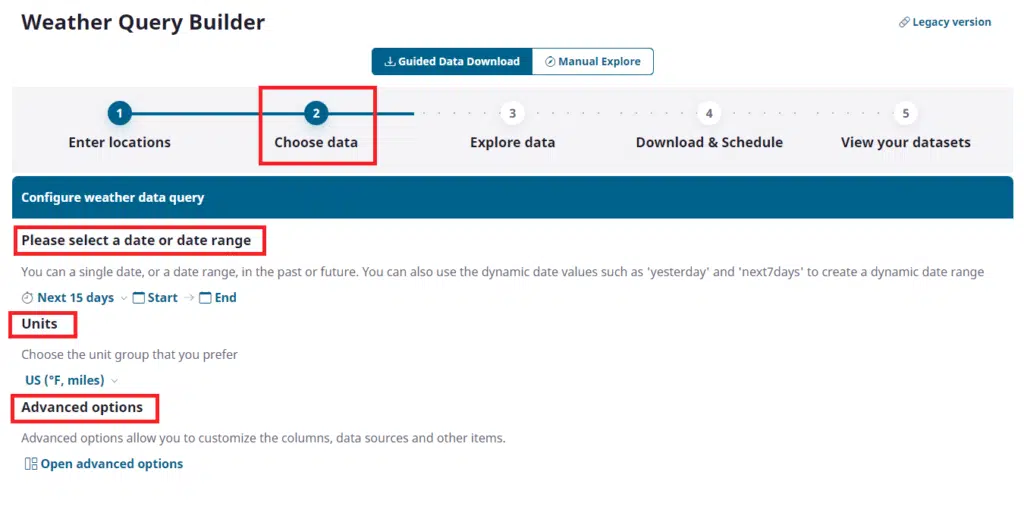

Step 2 of our overall dataset building task is to define what weather data we want to have in our Weather Dataset package.

We have 3 sections to fill out: Dates for our weather data, Units of measure, and advanced options to pick out exactly what data elements will be included in the dataset.

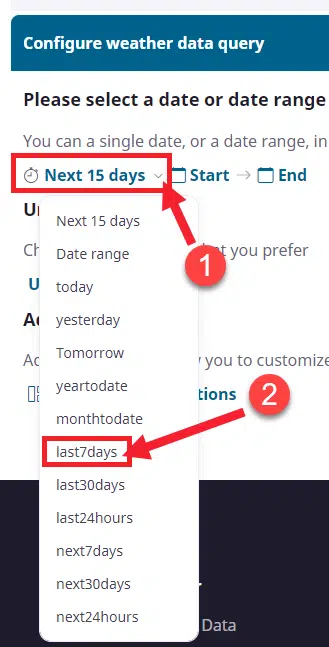

To pick your dates you can either choose from a list of dynamic date macros such as ‘Today’, ‘Last 7 days’ or ‘15 day forecast’, or you can provide a specific date range from anywhere in the past (1970 or later) to the future. If you choose past dates you get station-recorded data; if you choose dates up to 15 days into the future you get model-predicted forecasts; and if you go further than 15 days you get a statistical forecast based upon 10 years of historical data for your location. The Timeline API that supports the Query Builder allows for a continuous timeline of weather. Let’s pick the dates we will use here:

For this exercise we are setting up a recurring dataset that will allow us to always get the last 7 days of weather data for all of the locations in our list. Above we choose one of the dynamic date macros called ‘last7days’. Every time this dataset is run, either by schedule or manually, it will pull the last 7 days of weather. Please note that it will only update when it is re-executed manually or by a schedule.
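The date rules described above can be summarized in a few lines of code. This is only a sketch of the description in this tutorial, not the service's actual logic. In particular, how the service treats today's date and the exact 15-day boundary is an assumption here.

```python
from datetime import date, timedelta

FORECAST_DAYS = 15      # forecast horizon mentioned in the tutorial
EARLIEST_YEAR = 1970    # earliest historical year mentioned in the tutorial


def expected_source(target, today):
    """Guess which kind of data a requested date would return.

    Assumptions: today counts as the first forecast day, and the
    forecast window covers FORECAST_DAYS days starting today.
    """
    if target.year < EARLIEST_YEAR:
        return "outside supported range"
    if target < today:
        return "station observations"
    if target < today + timedelta(days=FORECAST_DAYS):
        return "model forecast"
    return "statistical forecast"


today = date(2024, 6, 1)  # fixed date so the example is repeatable
for target in (date(2024, 5, 25), date(2024, 6, 10), date(2024, 7, 15)):
    print(target.isoformat(), "->", expected_source(target, today))
```

A range that spans several of these categories simply returns a mix of sources, which is what the continuous timeline refers to.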

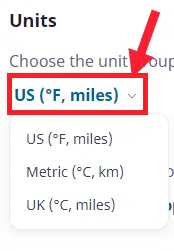

Next we will choose our units of measure:

We will choose US units but you should choose what is appropriate for your scenario. To understand more about our units please refer to this document:

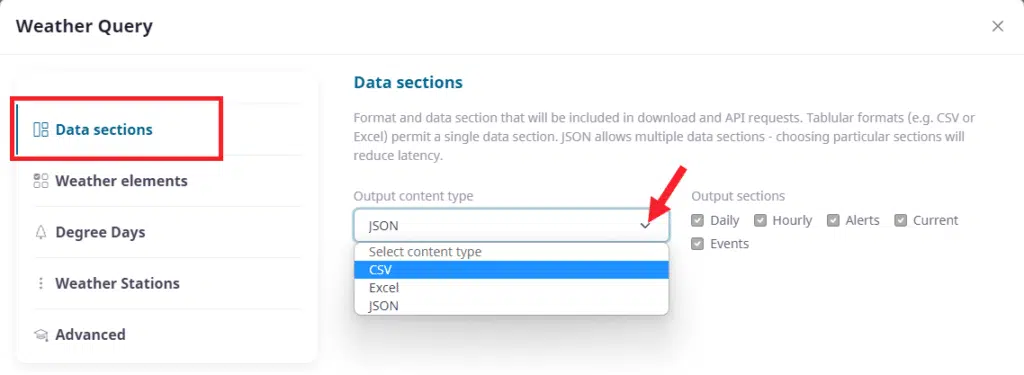

Finally we will get to pick the data we want to include in this dataset. This area contains the most information, much of which we will not be able to address in this basic tutorial. For this exercise we will make selections of specific weather variables and discuss where the data is retrieved from. Let’s start by opening the Data Chooser by clicking on ‘Advanced options’:

There are many pieces to choosing what data you want in your dataset and we will try to keep it simple. The first area is ‘Data Sections’ as we see above. Data sections are the types of data you can have in your dataset, such as daily weather, hourly data, alerts, events and current weather. We also have to choose the content type/format of the data. If you are a coder you will likely want JSON, and if you are a data analyst you will likely want CSV. If you work in Excel you can choose Excel or CSV.

JSON can contain all of the sections at once because it has a nested structure, whereas CSV is basically a flat grid of data separated by commas. In this exercise we will choose CSV as it is the most flexible format and is probably the best fit for downloading files of data in packages. JSON is best for live-queried data directly from the server rather than building a dataset to pass around.

Once you select ‘CSV’ from the list you will be able to choose only one section of data. For this exercise we will choose the ‘Daily’ weather selection which will give us the daily weather totals for every single day in our time selection.
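The one-section limit follows from the difference between nested and flat data. The sketch below uses a made-up nested document, with illustrative field names such as `days`, `hours` and `tempmax` that are assumptions rather than a guaranteed schema, and flattens only its daily section into CSV rows.

```python
import csv
import io

# Hypothetical nested document: daily values with hourly values inside.
nested = {
    "address": "Reston",
    "days": [
        {
            "datetime": "2024-06-01",
            "tempmax": 80.1,
            "hours": [
                {"datetime": "00:00:00", "temp": 65.0},
                {"datetime": "01:00:00", "temp": 64.2},
            ],
        },
    ],
}


def days_to_csv(doc):
    """Flatten only the daily section into one CSV row per day."""
    out = io.StringIO()
    writer = csv.writer(out, lineterminator="\n")
    writer.writerow(["address", "datetime", "tempmax"])
    for day in doc["days"]:
        # The nested hourly rows have different columns and a different
        # row count, so they cannot share this flat grid.
        writer.writerow([doc["address"], day["datetime"], day["tempmax"]])
    return out.getvalue()


print(days_to_csv(nested))
```

The hourly rows would need their own header and their own file, which is why a CSV package holds a single section at a time.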

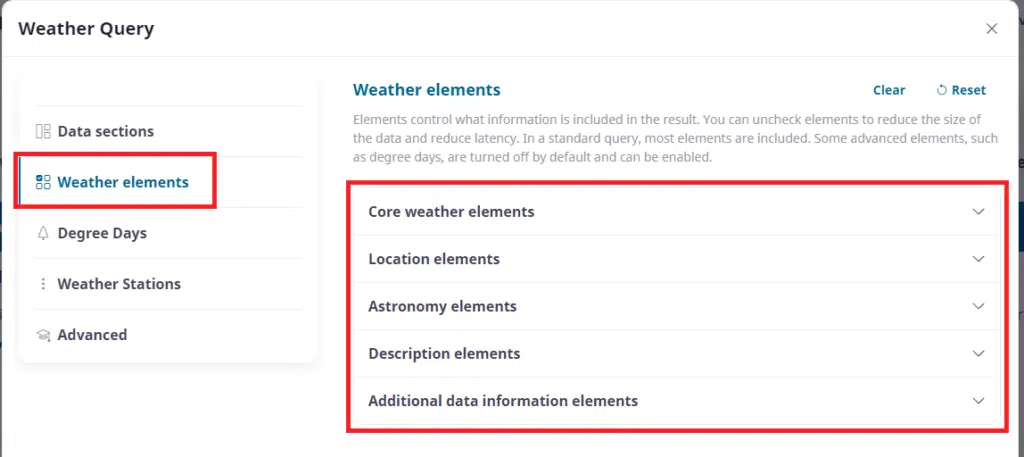

Next we will click on ‘Weather Elements’ to choose what columns we want in our dataset. We can see that the elements are broken down into 5 sections and we will start with ‘Core weather elements’:

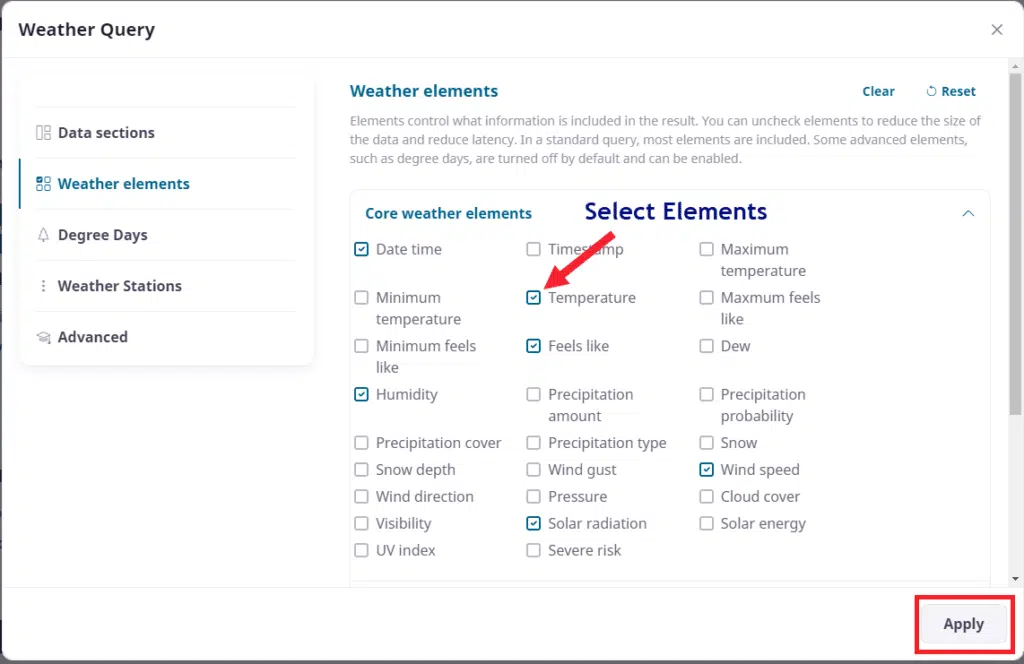

Opening the core weather elements we can begin to make our selections.

Here we can simply check/uncheck weather elements that we need. Be careful if you choose the ‘Clear’ option at the top as it will clear more than just what you see in the ‘Core weather elements’ section. Remember to scroll down and choose other columns of data such as our location columns and more.

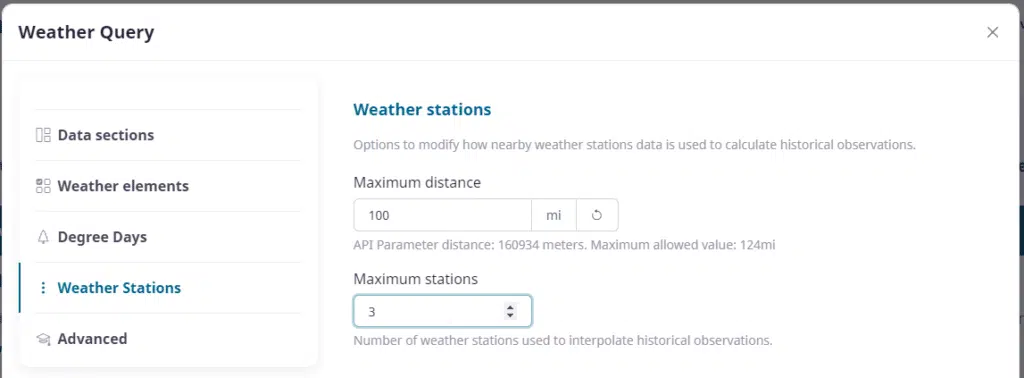

One of the most important areas of your data selection is the area that defines station data. The Visual Crossing service is based upon a system of interpolation that gives users custom weather data for their location. By default, 3 stations are used to interpolate data for any position on earth. Here users can define how many stations are used, as well as how far our system can reach to use those stations. Rural locations may need to reach further than the defaults to retrieve data.

We will set our values to 100 miles and 3 stations. Remember that data is retrieved on an hourly basis and then aggregated to daily. So users of daily data may see more than 3 contributions due to the aggregation of hourly data.
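A small sketch shows how a daily row can list more than 3 stations even though each hour uses at most 3. The station IDs and the hour-by-hour pattern below are invented purely for illustration.

```python
def daily_station_contributors(hourly_stations):
    """Return every station that contributed to at least one hour."""
    contributors = set()
    for stations in hourly_stations:
        contributors.update(stations)
    return sorted(contributors)


# Hypothetical day: one station stops reporting at midday and a
# different station takes its place for the remaining hours.
morning = ["STN_A", "STN_B", "STN_C"]
afternoon = ["STN_A", "STN_B", "STN_D"]
hourly = [morning] * 12 + [afternoon] * 12

print(daily_station_contributors(hourly))
```

Each of the 24 hours uses 3 stations, but the day as a whole draws on 4.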

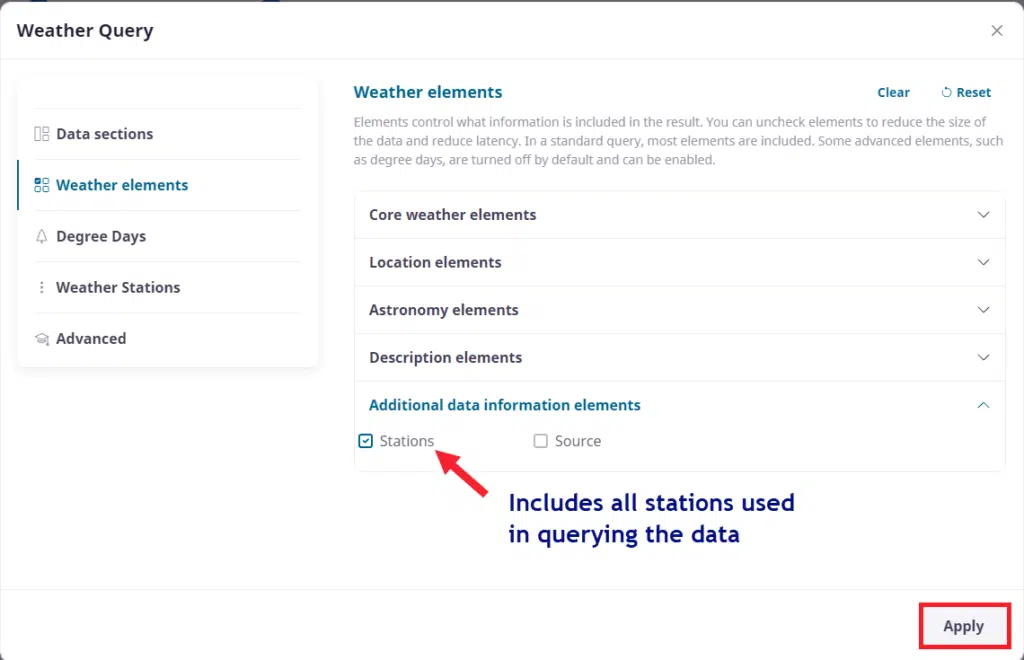

If users wish to see which stations contributed to their data, they can go back and include station information as a column in the ‘Additional data’ section of the weather elements.

We are now done with our data selections and can click ‘Apply’ & ‘Continue’ to move onto our data exploration step.

Exploring the weather data

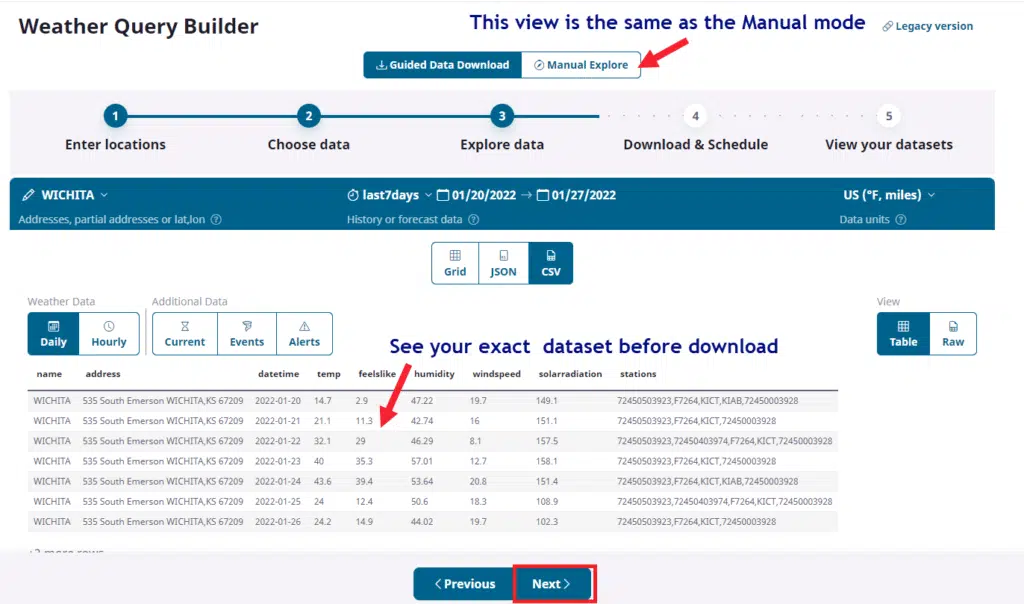

This step is a validation of everything we have done as well as an introduction into Manual Mode. Even though we are still in the Guided mode we can see all of the choices we made previously.

Note above that the Data Preview window has our exact query and a sample of the data that will be returned. In CSV mode we can choose to view the data in this table format or in the raw, comma-delimited format. If anything is incorrect we can use the ‘Previous’ button to fix specific items and then return to this view.

Please stay in our Guided mode and click ‘Next’.

Download the weather data

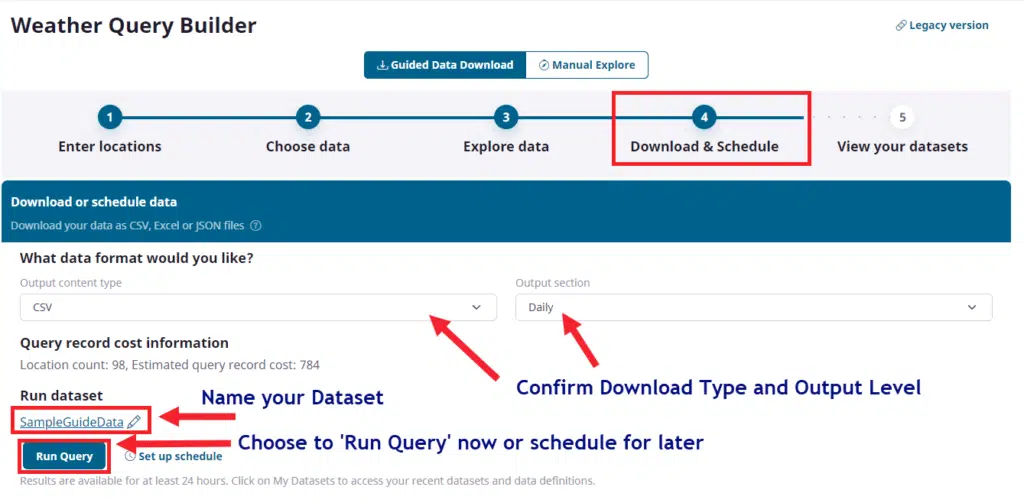

Finally we are ready to download our dataset! The Download step’s primary job is to confirm your data format, run the actual data query and let you download the data directly in your browser OR set up a schedule, which we will discuss separately.

Confirm our ‘CSV’ selection and our ‘Daily’ weather granularity selection using the two drop-down controls. Next, click on the name of your dataset and rename it as you wish. Finally, if you are OK with the purchase, click on the ‘Run Query’ button and the system will execute your query. Depending upon the size and type of data, this may take a few minutes to run. When complete, the system will ask you if you wish to download the data:

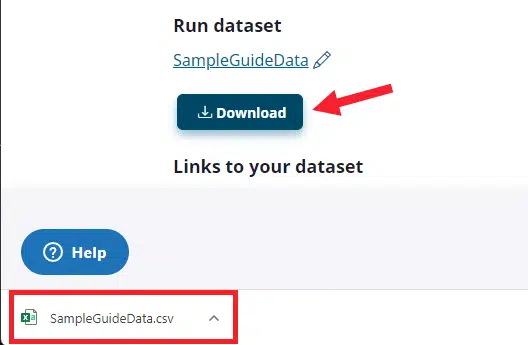

Click on the ‘Download’ button and the CSV file of weather data will download directly into the download section of your browser as seen above.

NOTE: Different browsers show and save downloads in different places, so make sure you know where your browser puts downloaded files. Also note that certain security settings may not allow this operation without your permission.

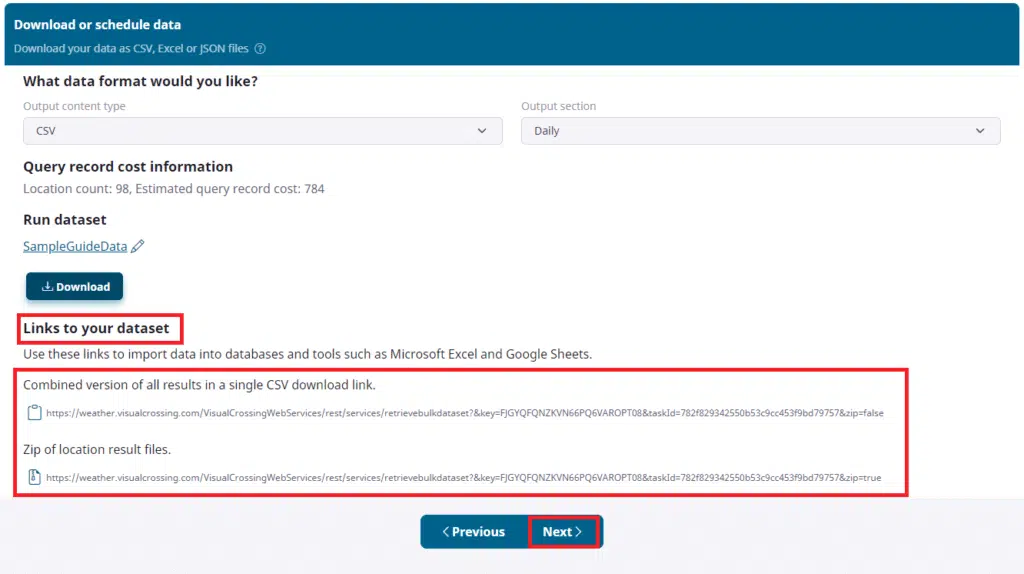

At the bottom of this section are URL links directly to your dataset. The links are only available after the query has completed.

As long as the dataset exists in the system you can use these links to directly access your file dataset. More on this below in the ‘MyDatasets’ section.

You should now have your dataset and you can click ‘Continue’ to move onto our final section ‘View your datasets’.

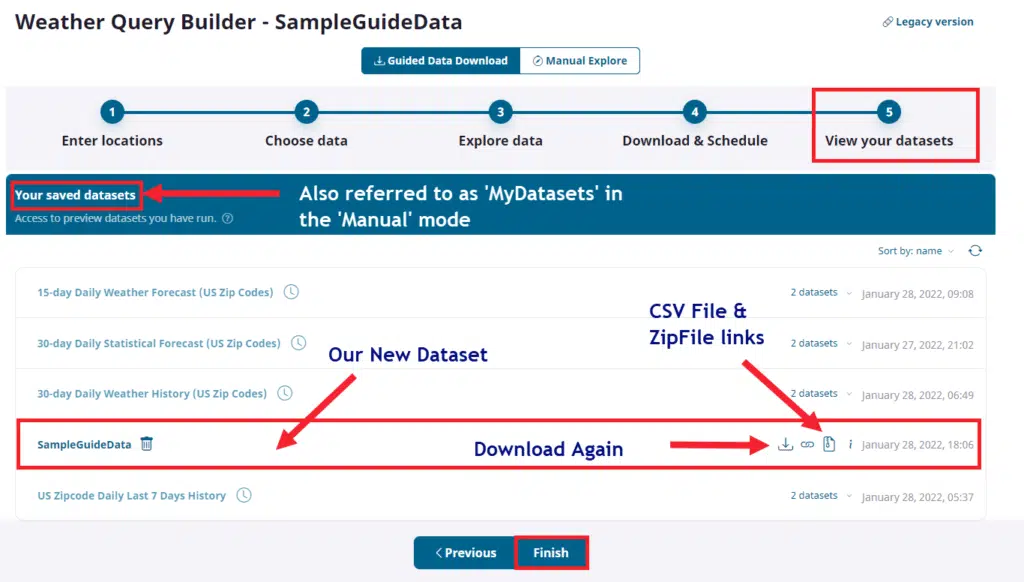

This section is also referred to as ‘My Datasets’. This is the location for all data that you have downloaded, but do note that datasets have a limited lifespan and will be removed based upon space and time. However, if you schedule datasets or want to re-download a recent dataset, you can do so from this section.

Also note that you can find URL links to this dataset for use in Weather Workbooks or other applications that wish to stream the file contents directly using the Web URL. Please note that there are CSV links and Zip file versions, and if you are downloading directly into Excel or an application, CSV is typically preferred.
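As a rough sketch of how a script might consume one of these CSV links, the example below fetches a URL and parses the rows using only the Python standard library. The URL is whatever CSV link you copy from this page; none is hard-coded here. The column names in the sample (`name`, `datetime`, `tempmax`) are assumptions, so check the header row of your own dataset.

```python
import csv
import io
import urllib.request


def parse_dataset(csv_text):
    """Turn CSV text into a list of dictionaries keyed by column name."""
    return list(csv.DictReader(io.StringIO(csv_text)))


def fetch_dataset(url, timeout=30):
    """Download a CSV dataset link and parse it.

    'utf-8-sig' decodes plain UTF-8 and also drops a leading byte
    order mark if the file happens to have one.
    """
    with urllib.request.urlopen(url, timeout=timeout) as response:
        text = response.read().decode("utf-8-sig")
    return parse_dataset(text)


# Offline demonstration with made-up rows. In real use you would call
# fetch_dataset() with the CSV link copied from your dataset page.
sample = ("name,datetime,tempmax\n"
          "Reston,2024-01-01,45.0\n"
          "Boston,2024-01-01,38.2\n")
rows = parse_dataset(sample)
print(len(rows), rows[0]["name"], rows[0]["tempmax"])
```

Values arrive as strings, so convert numeric columns with `float()` before doing any arithmetic.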

Create a weather data schedule

One of the most powerful aspects of building a standalone dataset package is that you can schedule new versions of any dataset to be created daily. Your URL links will always point to the latest version, so any application that accesses these scheduled datasets simply has to refresh the data to get the latest version.

Here is a document that will give you more information about how to set up a scheduled dataset.

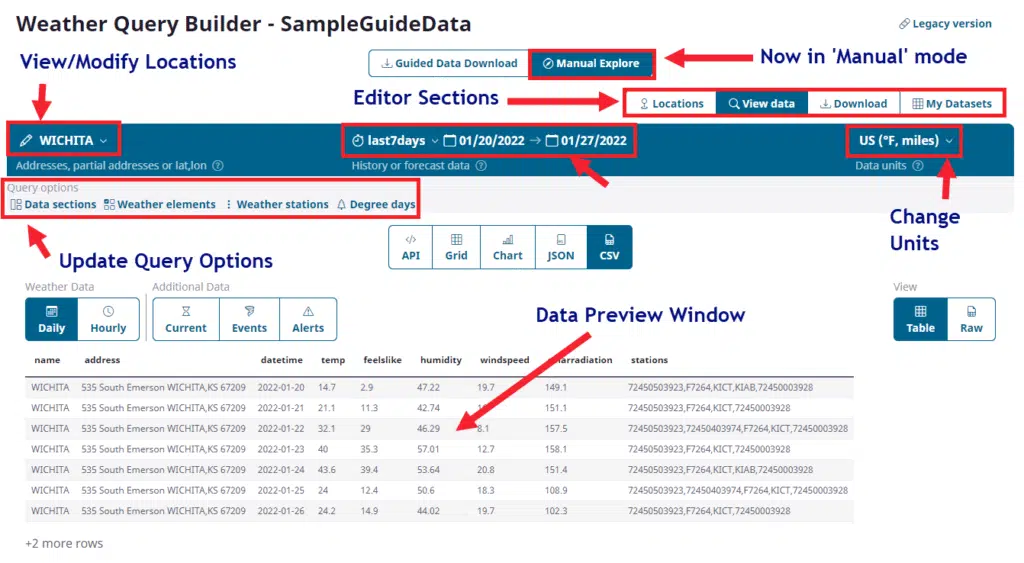

One final note, now that you know all of the steps in the Guided Mode you can graduate to using Manual mode and create your sets directly in the Explore interface:

Congratulations, you have now completed the creation of your first weather dataset! Please let us know if you have any additional questions at our Support Page.